… but hides the full support required on the back-end!

This is important to point out for two reasons:

- Gen-AI Hype-mongers will use this as another excuse to claim most white-collar functions will be entirely eliminated when, in fact, it strengthens the need for true back-office white-collar workers and real software engineers

- Expert human support becomes more critical at each stage of the process (while bit-pushers become less and less useful)

But let’s back up. In his most recent piece, where he (re-)introduced the SaS Flywheel, Phil made one critical statement that the industry constantly overlooks: Stop treating FDE as optional: Your AI Flywheel will not spin without it.

As Phil astutely points out, the hard question nobody is answering is this: who actually wires AI into your live systems, governs it in production, and makes it keep working when the AI software vendors leave the room? The answer is, of course, your Forward Deployed Engineer (FDE), and if your transformation strategy does not have one, you are building an AI theatre, not an AI operating model. (Which, FYI, is what most companies are building and, as Stephen Klein notes, they are putting on puppet shows: great for entertainment, but not so great for getting anything done, especially since they all overlook what AI can actually do.)

Now, a forward deployed engineer alone will not get you out of pilot purgatory, but one is an essential condition. Just as you can’t climb out of a deep, wide hole with smooth vertical walls on all sides without a rope or a ladder, you can’t fly your way out of a pilot without a working plane, and you don’t have a working plane without an engineer to keep it running.

As Phil continues, FDE is not implementation; it is the engineering layer that makes AI governable. This is because FDE teams build ontologies that reflect how the enterprise actually operates, wire models into real data with real permissions, and design the governance architecture that keeps autonomous systems accountable, which will, now and for quite some time into the future, wire in non-overridable human oversight, approval, and review.

Phil goes on to list a few key things that LLMs cannot do on their own. (It’s in no way a complete list, but hopefully enough to get executives questioning all the AI-BS from the AI hype-mongers presenting grandiose claims that likely won’t be a reality within most of our professional lifetimes.) Even better, Phil points out that Agentic AI without FDE governance is not transformation. It is risk accumulation! He then lists five key requirements of workable AI that can’t be achieved without an FDE. (There are more, but again, these should be enough to help executives realize that not only are LLMs sorely insufficient for almost every task they are being promoted for, but they aren’t even usable at all without the help of an FDE team.)

Phil also does us a great service by pointing out that while vibe coding creates velocity, FDE prevents it from becoming chaos, which is what happens every single time you employ vibe coding without FDEs (and a real engineering team, but we’ll get to that).

Vibe coding is simultaneously one of the biggest boons to software development and one of its greatest destroyers, especially since it is almost universally misunderstood and misapplied. For example, while Phil’s statement that business analysts can express intent and receive working agent code in return is technically correct, it’s not practically correct. That’s because vibe coding produces code that is insecure, inefficient, and not appropriate for enterprise software. In fact, just about every startup that tried to launch an enterprise app on vibe coding alone has lost hundreds of thousands (or more) attempting to do so; see this great post from Alex Turnbull.

Vibe coding is super useful because, with the help of an FDE team and a good business analyst, the end-user organization can quickly create functional prototypes that demonstrate precisely what it is looking for. These prototypes are much more powerful functional specifications than traditional specification documents with text descriptions of required functionality and PowerPoint mockups. Plus, they can be created in a fraction of the time. But that’s all they are: prototypes. Real applications still need to be built by real software engineering teams who can build optimized, bug-free, secure code, as opposed to the unoptimized, buggy (especially at the boundaries), and insecure code regularly generated by AI-based vibe coding tools (where, depending on what source you access, 53% to 78% of code generated has serious security issues).

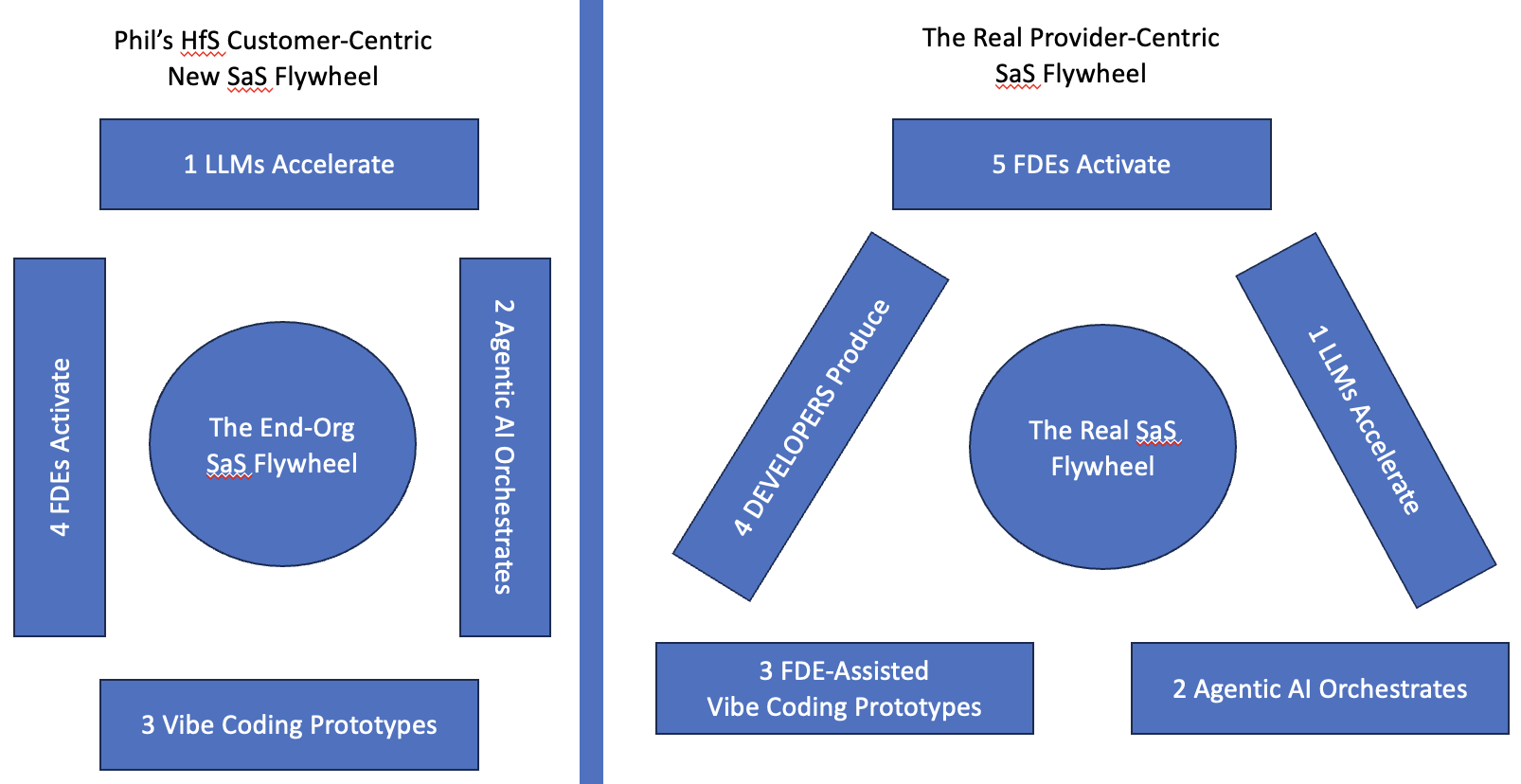

In other words, it’s a great article from a customer-centric viewpoint, written for customer executives. From a back-end provider perspective, it’s missing one key step: the development step that takes vibe-coded prototypes and produces real (AI-backed) enterprise applications.

Moreover, it centralizes the FDE activities when, in reality, FDE teams are engaged throughout the entire cycle:

- they activate, and put the foundation in place

- they train the users on how to properly use the LLMs for accelerated research and are always on call for help

- they maintain the orchestration layer, and improve (and correct) it as necessary

- they work with the end users to vibe code prototypes

- they work with the development team to build the next generation (or iteration) of the enterprise apps in the SaS model

In other words, AI can enhance SaS, but it cannot replace the need for skilled humans on the provider side (for development, implementation, maintenance, and improvement) or the buyer side (for process definition, improvement, decision criteria, etc.).

At the end of the day, AI can only replace bit-pushers who do tactical data processing tasks that should have been automated by machines 30 years ago (when it was promised), but it can’t replace anyone who needs to make a (strategic) decision. This is true regardless of the model, and the right model, like Phil’s SaS flywheel, actually exemplifies the need for the right, skilled talent.